Self-Supervised Learning for Tactile Classification

Tactile sensing is essential for robust material classification, yet supervised approaches require costly labeled data. This project explores self-supervised learning with a shared TacNet-II encoder, comparing classic autoencoders, masked autoencoders (MAE), and CNN-JEPA. It reports improved accuracy and sample efficiency on downstream tactile classification.

Overview

Efficient tactile sensing is critical for robots that must grasp, manipulate, and identify materials in real-world environments. Modern supervised models such as TacNet-II achieve high accuracy but rely on large labeled datasets that are expensive to collect.

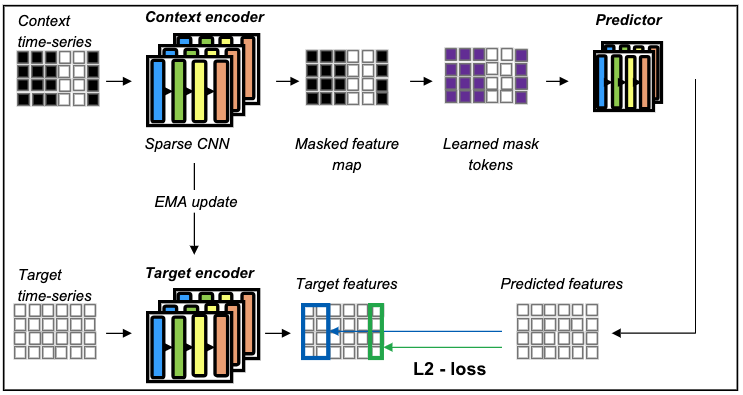

To reduce this dependency, this work investigates self-supervised pretraining for tactile time-series data, adapting CNN-JEPA and comparing it with MAE and classic autoencoders. The study analyzes how different splits of the data between pretraining and downstream training affect performance, and shows where predictive representation learning provides the most benefit.
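To make the difference between the compared objectives concrete, the following is a minimal NumPy sketch, not the project's implementation. It contrasts an MAE-style loss, which reconstructs the raw signal at masked time steps, with a JEPA-style loss, which predicts the embeddings of masked time steps in latent space. Everything in it is an assumption for illustration: the window shape (64 time steps, 16 channels), the toy `encode` function standing in for the TacNet-II encoder, the 50% mask ratio, and the choice to zero out masked steps rather than drop them.

```python
import numpy as np

def random_mask(num_steps, mask_ratio, rng):
    """Boolean mask over time steps; True marks a masked (hidden) step."""
    num_masked = int(round(num_steps * mask_ratio))
    mask = np.zeros(num_steps, dtype=bool)
    mask[rng.choice(num_steps, size=num_masked, replace=False)] = True
    return mask

def encode(x, weights, kernel_size=5):
    """Toy encoder (hypothetical stand-in for a CNN): moving average over
    time, then a linear projection and tanh. The temporal mixing lets
    masked steps receive information from visible neighbours."""
    kernel = np.ones(kernel_size) / kernel_size
    mixed = np.apply_along_axis(
        lambda c: np.convolve(c, kernel, mode="same"), 0, x
    )
    return np.tanh(mixed @ weights)

def mae_loss(x, mask, enc_w, dec_w):
    """MAE-style objective: reconstruct the raw signal, scored on masked steps only."""
    x_visible = np.where(mask[:, None], 0.0, x)  # hide masked steps
    recon = encode(x_visible, enc_w) @ dec_w
    return float(np.mean((recon[mask] - x[mask]) ** 2))

def jepa_loss(x, mask, enc_w, target_w, pred_w):
    """JEPA-style objective: predict target-encoder embeddings of masked steps."""
    x_visible = np.where(mask[:, None], 0.0, x)
    predicted = encode(x_visible, enc_w) @ pred_w
    target = encode(x, target_w)  # target encoder sees the full window
    return float(np.mean((predicted[mask] - target[mask]) ** 2))

rng = np.random.default_rng(0)
T, C, D = 64, 16, 8  # assumed: time steps, sensor channels, embedding size
x = rng.standard_normal((T, C))  # synthetic stand-in for a tactile window
mask = random_mask(T, mask_ratio=0.5, rng=rng)

enc_w = rng.standard_normal((C, D)) * 0.1
dec_w = rng.standard_normal((D, C)) * 0.1
pred_w = rng.standard_normal((D, D)) * 0.1
target_w = enc_w.copy()  # in JEPA-style training this is typically an EMA of the encoder

mae = mae_loss(x, mask, enc_w, dec_w)
jepa = jepa_loss(x, mask, enc_w, target_w, pred_w)
print(f"MAE-style loss: {mae:.4f}  JEPA-style loss: {jepa:.4f}")
```

The only structural difference between the two functions is the space in which the error is measured: input space for the MAE-style loss, embedding space for the JEPA-style loss. A classic autoencoder corresponds to the MAE-style loss with no masking and the error averaged over all time steps.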