A Multi-objective Approach to Safe Reinforcement Learning for Smart Grids

Reinforcement learning (RL) controllers are common in building energy management, but safety constraints are often folded into the reward as a single penalty term. This work casts the problem as multi-objective RL (MORL) with vector-valued rewards and evaluates MORL algorithms on a smart household scenario, showing improved Pareto trade-offs between cost and safety.

Overview

Renewable-heavy grids need energy management strategies that can cope with uncertainty in weather and price forecasts. Model Predictive Control (MPC) can be optimal with perfect forecasts, but its performance drops in practice, where forecasts are imperfect. RL controllers have shown cost advantages, yet they struggle to satisfy hard safety constraints such as battery charge bounds.

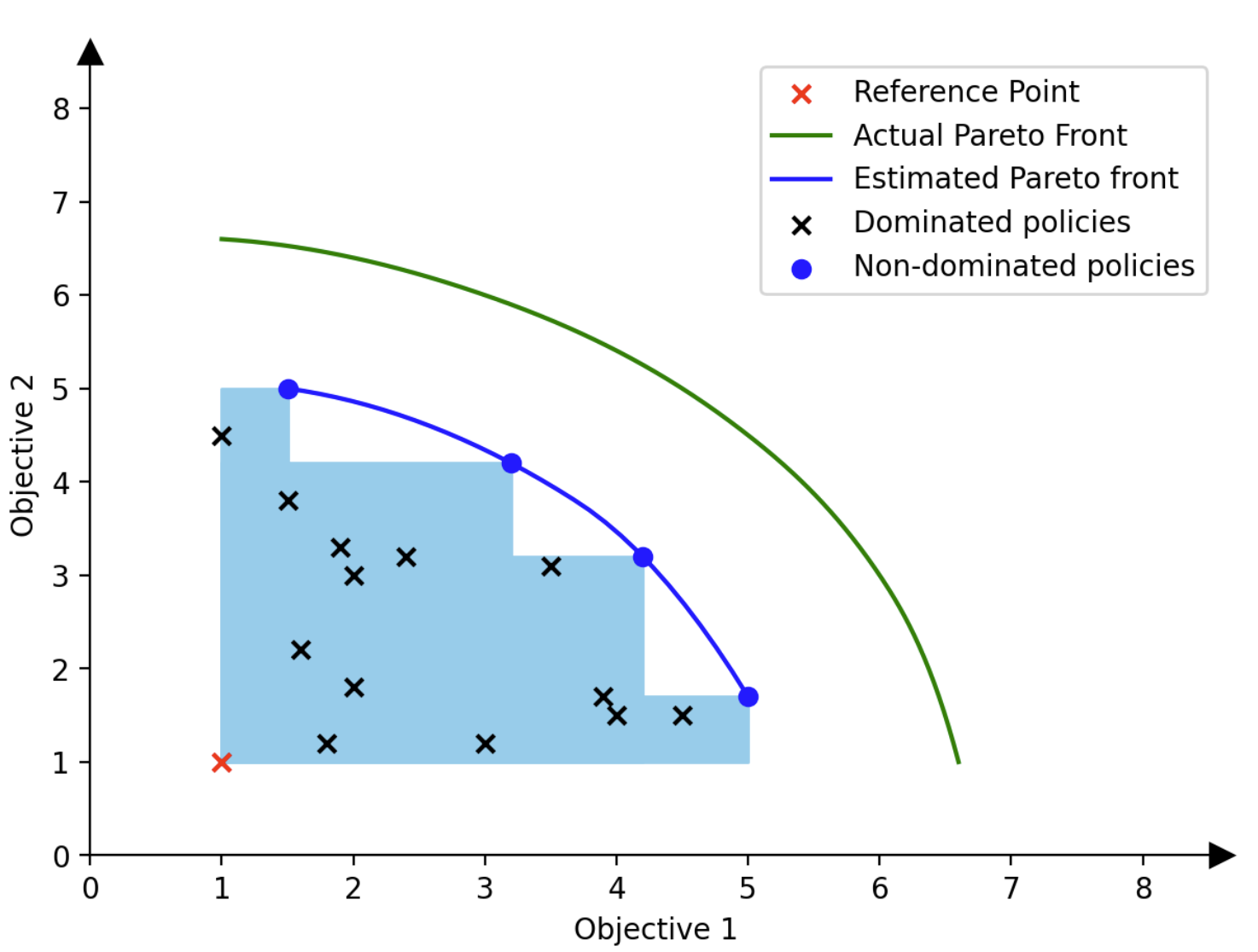

Traditional approaches add safety violations to the reward as a weighted penalty term, but this fixes the cost-safety trade-off in advance and makes retuning expensive, since changing the weight means retraining. This project frames the task as true multi-objective RL, keeping cost and safety as separate objectives so that an operating point can be selected flexibly without retraining.
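The contrast between the two reward formulations can be sketched in a few lines of Python. This is a minimal illustration rather than the project's implementation: the function names, the penalty weight `lam`, and the `(cost, violation)` numbers are all hypothetical and chosen only to show the mechanics. The idea is that once each policy is evaluated on both objectives separately, a Pareto front can be extracted and an operating point picked afterwards for any given safety budget.

```python
from typing import List, Tuple

# (energy cost, safety violation); both are to be minimized.
Point = Tuple[float, float]


def penalty_reward(cost: float, violation: float, lam: float) -> float:
    """Single-objective reward: the weight lam is fixed when training starts."""
    return -cost - lam * violation


def vector_reward(cost: float, violation: float) -> Point:
    """Multi-objective reward: each objective stays in its own component."""
    return (-cost, -violation)


def pareto_front(points: List[Point]) -> List[Point]:
    """Keep the points that no other point dominates (lower is better)."""
    front = []
    for p in points:
        dominated = any(
            q[0] <= p[0] and q[1] <= p[1] and q != p
            for q in points
        )
        if not dominated:
            front.append(p)
    return front


def pick_operating_point(front: List[Point], max_violation: float) -> Point:
    """Choose the cheapest point whose violation fits the safety budget."""
    feasible = [p for p in front if p[1] <= max_violation]
    if not feasible:
        raise ValueError("no policy meets the requested safety budget")
    return min(feasible, key=lambda p: p[0])


# Made-up evaluations of already-trained policies, for illustration only.
candidates = [(10.0, 0.5), (12.0, 0.1), (15.0, 0.0), (13.0, 0.3), (16.0, 0.2)]
front = pareto_front(candidates)

# Two different safety budgets select two different points, with no retraining.
strict = pick_operating_point(front, max_violation=0.0)
relaxed = pick_operating_point(front, max_violation=0.2)
```

With the penalty formulation, moving from the strict to the relaxed setting would mean choosing a new `lam` and training again; with the vector formulation, it is a lookup on the front that has already been computed.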