LVSM-VAE: Transformer-Based Novel View Synthesis in Latent Space

This project adapts the Large View Synthesis Model (LVSM) to operate inside a VAE latent space. Working on latents reduces inference cost while preserving novel-view quality, and allows longer context sequences at a fixed compute budget.

Overview

LVSM-VAE encodes reference images into a compressed latent space and feeds patchified latent tokens plus ray-based camera embeddings into a decoder-only transformer. By adjusting intrinsics for the latent resolution, the model preserves geometric consistency while predicting novel views directly in latent space before decoding back to RGB.
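The intrinsics adjustment and ray-based embedding described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the function names `scale_intrinsics` and `plucker_rays`, the 8x downsampling factor, the half-pixel-center convention, and the choice of Plücker coordinates (moment, direction) as the ray embedding are all assumptions made for the example.

```python
import numpy as np

def scale_intrinsics(K, downsample):
    """Rescale a 3x3 pinhole intrinsics matrix for a grid that is
    `downsample` times smaller than the original image.
    Assumes pixel centers sit at integer + 0.5 coordinates."""
    K = np.asarray(K, dtype=np.float64).copy()
    K[0, :] /= downsample  # fx, skew, cx
    K[1, :] /= downsample  # fy, cy
    return K

def plucker_rays(K, c2w, height, width):
    """Per-cell Plücker ray embedding of shape (height, width, 6).
    `c2w` is a 4x4 camera-to-world matrix (hypothetical convention)."""
    # Centers of each grid cell in (u, v) coordinates.
    u, v = np.meshgrid(np.arange(width) + 0.5, np.arange(height) + 0.5)
    pix = np.stack([u, v, np.ones_like(u)], axis=-1)       # (H, W, 3)
    dirs_cam = pix @ np.linalg.inv(K).T                    # back-project
    dirs_world = dirs_cam @ c2w[:3, :3].T                  # rotate to world
    dirs_world /= np.linalg.norm(dirs_world, axis=-1, keepdims=True)
    origin = np.broadcast_to(c2w[:3, 3], dirs_world.shape)
    moment = np.cross(origin, dirs_world)                  # o x d
    return np.concatenate([moment, dirs_world], axis=-1)

# Example: a 256x256 image encoded to a 32x32 latent grid (assumed 8x VAE).
K_image = np.array([[512.0, 0.0, 128.0],
                    [0.0, 512.0, 128.0],
                    [0.0, 0.0, 1.0]])
K_latent = scale_intrinsics(K_image, downsample=8)
c2w = np.eye(4)
c2w[:3, 3] = [0.1, 0.0, 0.0]  # camera shifted along x
rays = plucker_rays(K_latent, c2w, height=32, width=32)
```

With this convention, each latent cell's ray passes through the center of the 8x8 pixel block it summarizes, which is one way to keep the camera geometry consistent between image and latent resolutions. The resulting per-cell ray features would then be patchified alongside the latent tokens.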

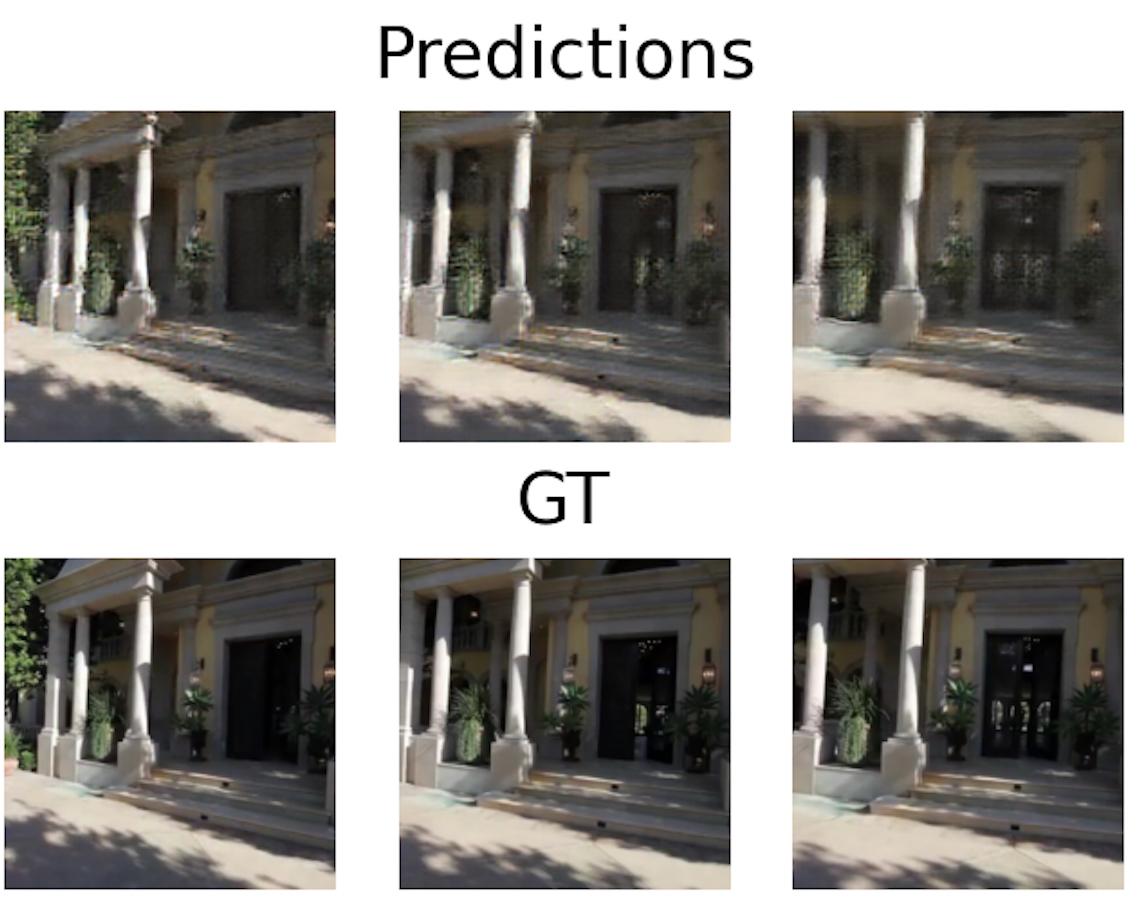

Experiments on RealEstate10K show PSNR and SSIM competitive with pixel-space LVSM baselines, at substantially lower inference memory and runtime. Increasing the number of reference views improves quality, and fine-tuning with larger context sizes yields the best quality-cost trade-offs under comparable compute constraints.